Role

Solo Full-Stack Developer

Year

2026

Tech Stack

7 Technologies

Status

Completed

Overview

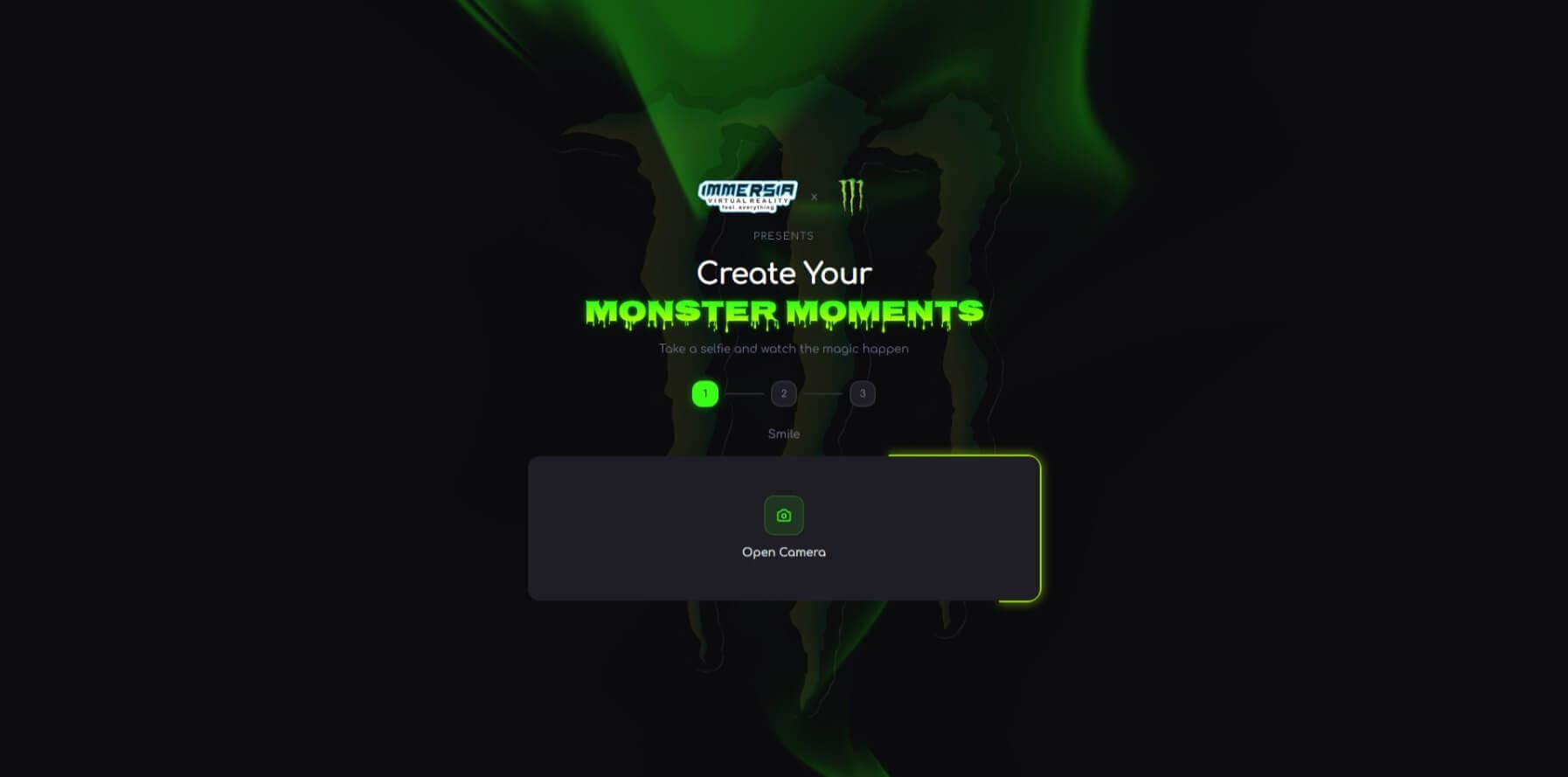

At a Monster Energy x Immersia branded event, every fan needed to walk away with a photorealistic AI-generated video of themselves hugging a celebrity — vertical, ready to post. I built the entire pipeline solo: a fan takes a selfie, picks a celebrity, and gets back an eight-second video in under three minutes. Three AI models work in sequence to composite the image, validate it, and animate it into video. Thousands of videos were generated at the live event.

The Problem

At live brand activations, fans want memorable, shareable moments with their favorite celebrities, but celebrities can't physically greet every person, and traditional photo booths feel dated and static. This app lets fans at a Monster Energy x Immersia branded event walk up, take a selfie, pick a celebrity (Poco Lee, Ruger), and walk away with a photorealistic AI-generated video of themselves hugging that celebrity: a 9:16 vertical video ready for Instagram, shareable via QR code, and generated in under three minutes.

Challenges

The core challenge was orchestrating three separate AI models into a single reliable pipeline that a non-technical event attendee could use at a kiosk in under three minutes. Each model has its own latency profile and failure modes, and the three must run sequentially.

At a live event booth with a line behind you, an error screen is unacceptable. The pipeline needed to push forward through non-critical failures while only halting on truly fatal ones — a slightly imperfect video always beats a crash.

Generated videos needed to play instantly the moment they were ready, but also be shareable via QR code after the user walks away. Instant playback and persistent sharing are competing requirements.

Solutions

Built a six-stage pipeline orchestrator that streams real-time progress to the client via SSE, with each AI model wrapped in its own retry and error handling logic.
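As a rough illustration of the orchestrator described above, the sketch below runs stages in order, wraps each one in a retry helper, and emits a Server-Sent Events frame before and after every stage. It is a minimal sketch, not the project's code: the `Stage` type, the `stage-start` / `stage-done` event names, the retry count, and the fixed delay are all assumptions made for the example.

```typescript
// Hypothetical shapes for illustration; the project's real stage names are not shown here.
type Stage<T> = { name: string; run: (input: T) => Promise<T> };

// Format one Server-Sent Events frame: an "event:" line, a "data:" line, then a blank line.
function sseFrame(event: string, data: unknown): string {
  return `event: ${event}\ndata: ${JSON.stringify(data)}\n\n`;
}

// Retry an async operation up to `attempts` times, pausing `delayMs` between tries.
async function withRetry<T>(fn: () => Promise<T>, attempts: number, delayMs: number): Promise<T> {
  let lastError: unknown = new Error("attempts must be at least 1");
  for (let attempt = 1; attempt <= attempts; attempt++) {
    try {
      return await fn();
    } catch (err) {
      lastError = err;
      if (attempt < attempts) {
        await new Promise<void>((resolve) => setTimeout(resolve, delayMs));
      }
    }
  }
  throw lastError;
}

// Run stages sequentially, sending a progress frame before and after each one.
// `send` is whatever writes to the open SSE response (assumed to exist elsewhere).
async function runPipeline<T>(
  stages: Stage<T>[],
  input: T,
  send: (frame: string) => void,
  attempts = 3,
  delayMs = 500,
): Promise<T> {
  let value = input;
  let index = 0;
  for (const stage of stages) {
    index += 1;
    const current = value;
    const progress = { stage: stage.name, index, total: stages.length };
    send(sseFrame("stage-start", progress));
    value = await withRetry(() => stage.run(current), attempts, delayMs);
    send(sseFrame("stage-done", progress));
  }
  return value;
}
```

On the client, an `EventSource` listening for these event names could drive a progress indicator, so the fan sees which stage is running while the models work.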

Designed a critical vs. non-critical step system: validation and prompt generation fail gracefully, while only image and video generation halt the pipeline when they fail. Everything else pushes forward.
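One way to express the critical vs. non-critical split is a flag on each step: a failure in a critical step aborts the run, while a failure in a non-critical step is recorded as a warning and the pipeline continues. The sketch below assumes a shared context object passed between steps; `Step`, `Ctx`, and `runSteps` are hypothetical names chosen for the example, not the project's actual API.

```typescript
// Hypothetical types: each step reads the shared context and returns fields to merge into it.
type Ctx = Record<string, unknown>;
type Step = { name: string; critical: boolean; run: (ctx: Ctx) => Promise<Ctx> };

// Run steps in order. Critical failures abort; non-critical failures become warnings.
async function runSteps(steps: Step[], initial: Ctx): Promise<{ ctx: Ctx; warnings: string[] }> {
  let ctx = initial;
  const warnings: string[] = [];
  for (const step of steps) {
    try {
      ctx = { ...ctx, ...(await step.run(ctx)) };
    } catch (err) {
      const message = err instanceof Error ? err.message : String(err);
      if (step.critical) {
        // Image or video generation failed: there is nothing to show, so stop here.
        throw new Error(`${step.name} failed: ${message}`);
      }
      // Validation or prompt generation failed: note it and keep going.
      warnings.push(`${step.name} skipped: ${message}`);
    }
  }
  return { ctx, warnings };
}
```

Later steps need a fallback for anything a skipped step would have produced, for example a default animation prompt when prompt generation fails (again an assumption about how such a fallback might look).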

Built a dual-path delivery system: video plays instantly via streaming data while a background upload creates a persistent URL for QR-code sharing. The user never waits.
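The dual-path idea can be sketched as a function that starts playback from the bytes already in hand and kicks off the upload without awaiting it. This is an illustrative sketch under assumptions: `deliverVideo`, the `play` and `upload` callbacks, and the choice to resolve to `null` on upload failure are hypothetical, and the real storage backend and player are not shown here.

```typescript
// Hypothetical result shape: the share URL arrives later, or null if the upload failed.
type Delivery = { shareUrl: Promise<string | null> };

function deliverVideo(
  video: Uint8Array,
  play: (video: Uint8Array) => void,
  upload: (video: Uint8Array) => Promise<string>,
): Delivery {
  // Path 1: start playback immediately from the in-memory video data.
  play(video);
  // Path 2: upload in the background. A failed upload resolves to null
  // instead of rejecting, so playback is never interrupted by it.
  const shareUrl = upload(video).catch(() => null);
  return { shareUrl };
}
```

The caller can render the player right away and show the QR code only once `shareUrl` resolves to a non-null value.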

Key Highlights

Three-Model AI Pipeline: Orchestrates image compositing, validation, and video animation in a six-stage pipeline — from selfie to shareable video in under 3 minutes.

Fault-Tolerant Event Design: Non-critical steps fail gracefully while the pipeline pushes forward. At a live booth, a slightly imperfect video always beats an error screen.

Instant Playback + QR Sharing: Videos play the moment they are ready, while a background upload creates a persistent link for QR-code sharing after the user walks away.

Live Event Proof: Thousands of videos generated at a real Monster Energy x Immersia brand activation in Nigeria — real fans creating real content.

Scale & Scope

Lines of Code: ~4,500

Source Files: 37

Pages / Routes: 4

API Endpoints: 4

Git Commits: 39

Outcome

Shipped and ran at a live Monster Energy x Immersia brand activation in Nigeria. Thousands of real videos generated by real fans.